

T-Mobile reports more growth, but price hikes loom

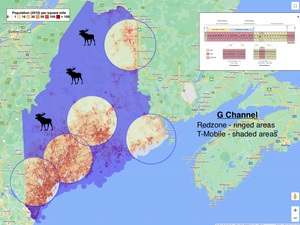

T-Mobile grew both its postpaid phone customer base and its fixed wireless customer base. However, company officials suggested the operator may raise some prices in the coming months.



